In large-scale AI clusters, bandwidth scaling is no longer just a network upgrade problem — it directly impacts GPU utilization, training efficiency, and infrastructure cost. A hyperscale cloud provider deploying NVIDIA H100 GPUs in early 2025 faced a familiar challenge: how to double fabric bandwidth without doubling fiber complexity, switch count, and power consumption.

By moving from 400G optics to 800G OSFP DR8 modules with breakout connectivity, the engineering team significantly reduced cabling complexity while increasing network scalability for future AI workloads. This is exactly why 800G OSFP has rapidly become the dominant form factor for AI and hyperscale networking.

If you are planning an 800G migration, comparing different 800G OSFP module types, or verifying switch platform compatibility, this guide is for you. We cover every 800G OSFP variant, NVIDIA ecosystem compatibility, power and thermal considerations, breakout configurations, real-world deployments, and the roadmap to 1.6T — with the technical depth network engineers require.

Need 800G OSFP modules tested for your platform? Explore our 800G OSFP catalog for SR8, DR8, FR8, 2xLR4, and VR8 variants.

An 800G OSFP transceiver is a hot-pluggable optical module designed to deliver 800 gigabits per second of aggregate bandwidth through eight electrical lanes operating at 100G PAM4 signaling.

OSFP stands for Octal Small Form-factor Pluggable, where “Octal” refers to the module’s eight electrical lanes. Each lane operates at 100Gbps using PAM4 modulation at 53.125 Gbaud, aligning with IEEE 802.3ck electrical interface standards.

Compared with earlier 400G optics, 800G OSFP modules provide:

Because of its larger mechanical design and integrated thermal architecture, OSFP is particularly well suited for:

The OSFP MSA Rev 5.0 defines the mechanical, electrical, and management interfaces for 800G OSFP modules, ensuring multi-vendor interoperability across switches, NICs, and transceivers. Modules comply with CMIS 4.0 or 5.0 for diagnostics and control via I2C, monitoring key parameters such as temperature, voltage, TX/RX optical power, and pre-FEC BER.
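As a rough illustration of how the monitored values above are turned into engineering units, the sketch below converts raw 16-bit register values into temperature, voltage, and optical power. The scaling factors (1/256 °C, 100 µV, and 0.1 µW per count) reflect our understanding of CMIS conventions and should be treated as assumptions to verify against the CMIS revision your module implements. The function names are hypothetical, and reading the registers over I2C is out of scope here.

```python
import math


def decode_temperature_c(raw: int) -> float:
    """Treat a 16-bit value as signed, in steps of 1/256 degC (assumed scaling)."""
    if raw >= 0x8000:          # two's-complement: values above 0x7FFF are negative
        raw -= 0x10000
    return raw / 256.0


def decode_voltage_v(raw: int) -> float:
    """Treat a 16-bit value as unsigned, in steps of 100 microvolts (assumed scaling)."""
    return raw * 100e-6


def decode_optical_power_dbm(raw: int) -> float:
    """Treat a 16-bit value as unsigned, in steps of 0.1 microwatt, and convert to dBm."""
    if raw == 0:
        return float("-inf")   # no light detected
    milliwatts = raw * 0.0001  # 0.1 uW per count = 0.0001 mW per count
    return 10.0 * math.log10(milliwatts)


if __name__ == "__main__":
    print(decode_temperature_c(0x2D80))      # 45.5
    print(decode_voltage_v(33000))           # about 3.3
    print(decode_optical_power_dbm(10000))   # about 0 dBm (1 mW)
```

Keeping the conversions as pure functions makes them easy to unit-test separately from whatever I2C access layer the host platform provides.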

Designed for high-power operation, the standard OSFP module measures approximately 22mm × 107mm and features an integrated heatsink. It supports up to 20W under typical airflow conditions — significantly higher than QSFP-DD800.

All 800G OSFP modules are built around an 8x100G PAM4 electrical architecture. Each electrical lane carries 100 Gbps using four-level pulse amplitude modulation (PAM4), which doubles the data per symbol compared to non-return-to-zero (NRZ) signaling. The result is 800 Gbps of aggregate throughput from the host ASIC to the optical module without requiring 16 physical lanes.
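The lane arithmetic can be checked with a few lines of Python. This is a minimal sketch using the 53.125 Gbaud symbol rate quoted earlier; the helper names are ours. Note that the raw line rate comes out above 800 Gbps because each lane also carries encoding and FEC overhead on top of its 100 Gbps payload.

```python
import math

BAUD_RATE_GBD = 53.125   # symbol rate per electrical lane, as quoted in the text
LANES = 8                # "Octal" = eight electrical lanes


def lane_rate_gbps(baud_gbd: float, bits_per_symbol: int) -> float:
    """Raw bit rate of one lane: symbols per second times bits per symbol."""
    return baud_gbd * bits_per_symbol


def lanes_needed(total_gbps: float, per_lane_gbps: float) -> int:
    """Number of lanes required to carry a given raw aggregate rate."""
    return math.ceil(total_gbps / per_lane_gbps)


pam4_lane = lane_rate_gbps(BAUD_RATE_GBD, 2)   # PAM4: 2 bits per symbol
nrz_lane = lane_rate_gbps(BAUD_RATE_GBD, 1)    # NRZ: 1 bit per symbol
raw_total = LANES * pam4_lane

print(pam4_lane)                          # 106.25
print(raw_total)                          # 850.0
print(lanes_needed(raw_total, nrz_lane))  # 16
```

At the same symbol rate, NRZ would need 16 lanes to carry what PAM4 carries in 8, which is the point the paragraph above makes.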

Optical implementations vary by module type:

While both OSFP and QSFP-DD800 support 800G, the industry has overwhelmingly chosen OSFP for AI deployments. The primary reasons are superior thermal performance and better support for 100G-per-lane signal integrity. QSFP-DD800 modules typically operate at 14–16W, close to the top of their thermal envelope, and risk thermal throttling in dense deployments, whereas OSFP comfortably handles modules at 15–17W and above.

Once NVIDIA standardized on OSFP for its ConnectX-7 NICs and Quantum-2 switches, the form factor for AI infrastructure was effectively settled. Arista, Cisco, and other major switch vendors followed with native OSFP platforms of their own for hyperscale 800G fabrics.

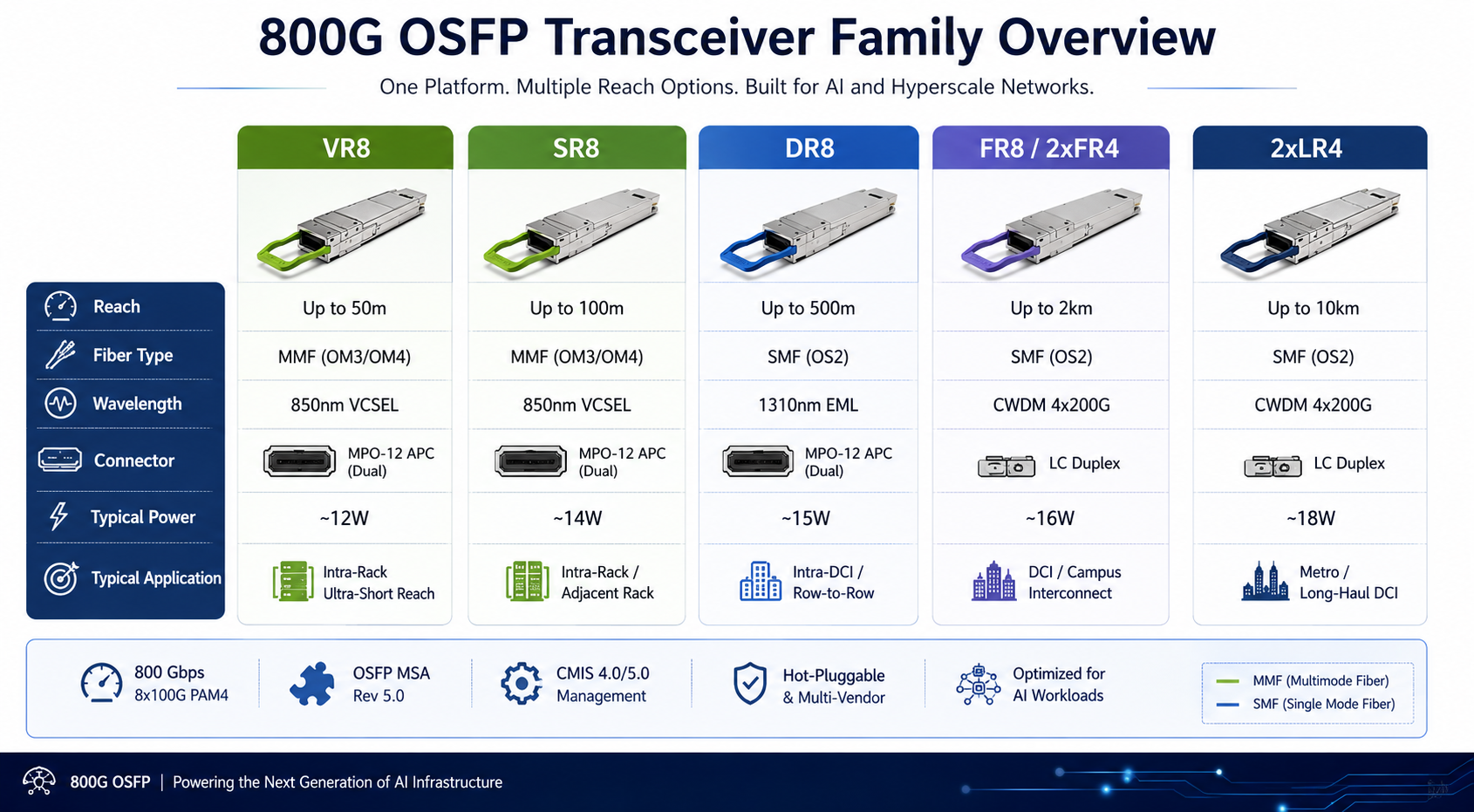

The 800G OSFP family includes six major variants covering reaches from 50 meters to 10 kilometers. Choosing the right module depends on your fiber infrastructure, link distance, and application.

The OSFP SR8 is the standard short-reach 800G module for intra-rack and intra-row deployments. It uses eight 100 Gbps PAM4 lanes over multimode fiber, with dual MPO-12 APC connectors carrying 16 fibers (8 transmit, 8 receive).

| Specification | Value |
| --- | --- |
| Reach | 100m (OM4) / 60m (OM3) |
| Wavelength | 850nm VCSEL |
| Connector | Dual MPO-12/APC |
| Power | Approximately 14W |
| Modulation | 8 x 100 Gbps PAM4 |
| Compliance | IEEE 802.3df, OSFP MSA Rev 5.0 |

The SR8 is the dominant choice for AI server-to-leaf connections within the same rack or adjacent racks. It supports breakout to 2x400G SR4 for connecting 800G switches to 400G server NICs.

800G OSFP 2xVR4 is a cost-optimized variant designed for ultra-short distance connections, typically within the same rack. It uses the same 850nm VCSEL architecture but with a reduced optical budget, enabling lower power consumption and more attractive pricing.

| Specification | Value |
| --- | --- |
| Reach | 50m (OM4) |
| Wavelength | 850nm VCSEL |
| Connector | Dual MPO-12/APC |
| Power | <15W (typically lower than SR8) |
| Modulation | 8 x 100 Gbps PAM4 |
| Compliance | IEEE 802.3df, OSFP MSA |

In addition, 800G OSFP 2xVR4 modules support breakout into 2x 400GBASE-VR4, 4x 200GBASE-VR2, or 8x 100GBASE-VR1, allowing flexible connections to lower-speed NICs. The reduced optical specifications also translate into lower cost and lower power consumption (typically 12W).

The OSFP DR8 is the most versatile 800G module for spine-leaf architectures and cross-row connections. It transmits eight 100 Gbps PAM4 signals over single-mode fiber at 1310nm, reaching 500 meters through dual MPO-12 APC connectors.

| Specification | Value |
| --- | --- |
| Reach | 500m (OS2 SMF) |
| Wavelength | 1310nm |
| Connector | Dual MPO-12/APC |
| Power | Approximately 14-16W |
| Modulation | 8 x 100 Gbps PAM4 |
| Compliance | IEEE 802.3df, OSFP MSA Rev 5.0 |

DR8 modules typically support breakout to 2x 400G DR4 or 8x 100G DR for connecting 800G spine switches to lower-speed leaf or border switches. A DR8+ variant extends the reach to 2km for longer single-mode links.

Looking for the right 800G OSFP module type for your network? Request a quote and our optical engineers will recommend the optimal variant for your application.

The 800G OSFP FR8 module (also marketed as 2xFR4) uses dual CWDM4 wavelength sets to transmit two independent 400 Gbps streams, each over its own duplex LC fiber pair, reaching 2 kilometers. It is the most fiber-efficient 800G option for data center interconnects.

| Specification | Value |
| --- | --- |
| Reach | 2km (OS2 SMF) |
| Wavelength | Dual CWDM4 (1271, 1291, 1311, 1331nm) |
| Connector | Dual duplex LC |
| Power | Approximately 16W |
| Modulation | 2 x (4 x 100 Gbps PAM4 with CWDM) |
| Compliance | IEEE 802.3df, OSFP MSA Rev 5.0 |

Because 2xFR4 carries two independent 400G FR4 signals, it can interconnect two distinct 400G FR4 endpoints without breakout cables. This makes it ideal for cost-optimized 800G DCI deployments where two 400G links between the same endpoints are needed.

For longer-reach applications, the OSFP 2xLR4 module extends 800G capability to 10 kilometers. It uses dual CWDM4 wavelength multiplexing over two duplex LC fiber pairs, similar to 2xFR4 but with higher launch power and improved receiver sensitivity for the extended reach.

| Specification | Value |
| --- | --- |
| Reach | 10km (OS2 SMF) |
| Wavelength | Dual CWDM4 (1271-1331nm) |
| Connector | Dual duplex LC |
| Power | Approximately 18W |
| Modulation | 2 x (4 x 100 Gbps PAM4 with CWDM) |
| Compliance | IEEE 802.3df, OSFP MSA Rev 5.0 |

2xLR4 modules are deployed for metro aggregation, inter-building enterprise WAN, and 5G transport applications where 800G capacity is needed over multi-kilometer fiber spans.
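The selection logic described across the variants above can be sketched as a simple lookup: pick the shortest-reach module that still covers the link distance on the installed fiber type. The reach and power figures are the ones quoted in this guide (OM4 reach for multimode); the `Variant` class and `pick_variant` function are hypothetical names used for illustration, and a real selection would also weigh connector type, cost, and platform support.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Variant:
    name: str
    fiber: str              # "MMF" (multimode) or "SMF" (single-mode)
    reach_m: int            # maximum reach in meters, as quoted in this guide
    typical_power_w: float  # approximate typical power, as quoted in this guide


VARIANTS = [
    Variant("VR8 (2xVR4)", "MMF", 50, 12.0),
    Variant("SR8", "MMF", 100, 14.0),
    Variant("DR8", "SMF", 500, 15.0),
    Variant("FR8 (2xFR4)", "SMF", 2_000, 16.0),
    Variant("2xLR4", "SMF", 10_000, 18.0),
]


def pick_variant(distance_m: float, fiber: str) -> Optional[Variant]:
    """Return the shortest-reach variant that covers the distance, or None."""
    candidates = [v for v in VARIANTS if v.fiber == fiber and v.reach_m >= distance_m]
    return min(candidates, key=lambda v: v.reach_m) if candidates else None


if __name__ == "__main__":
    for distance, fiber in [(30, "MMF"), (80, "MMF"), (300, "SMF"), (8_000, "SMF")]:
        choice = pick_variant(distance, fiber)
        print(distance, fiber, "->", choice.name if choice else "no match")
```

Preferring the shortest sufficient reach tends to track the lowest power and cost, since both rise with reach in the tables above.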

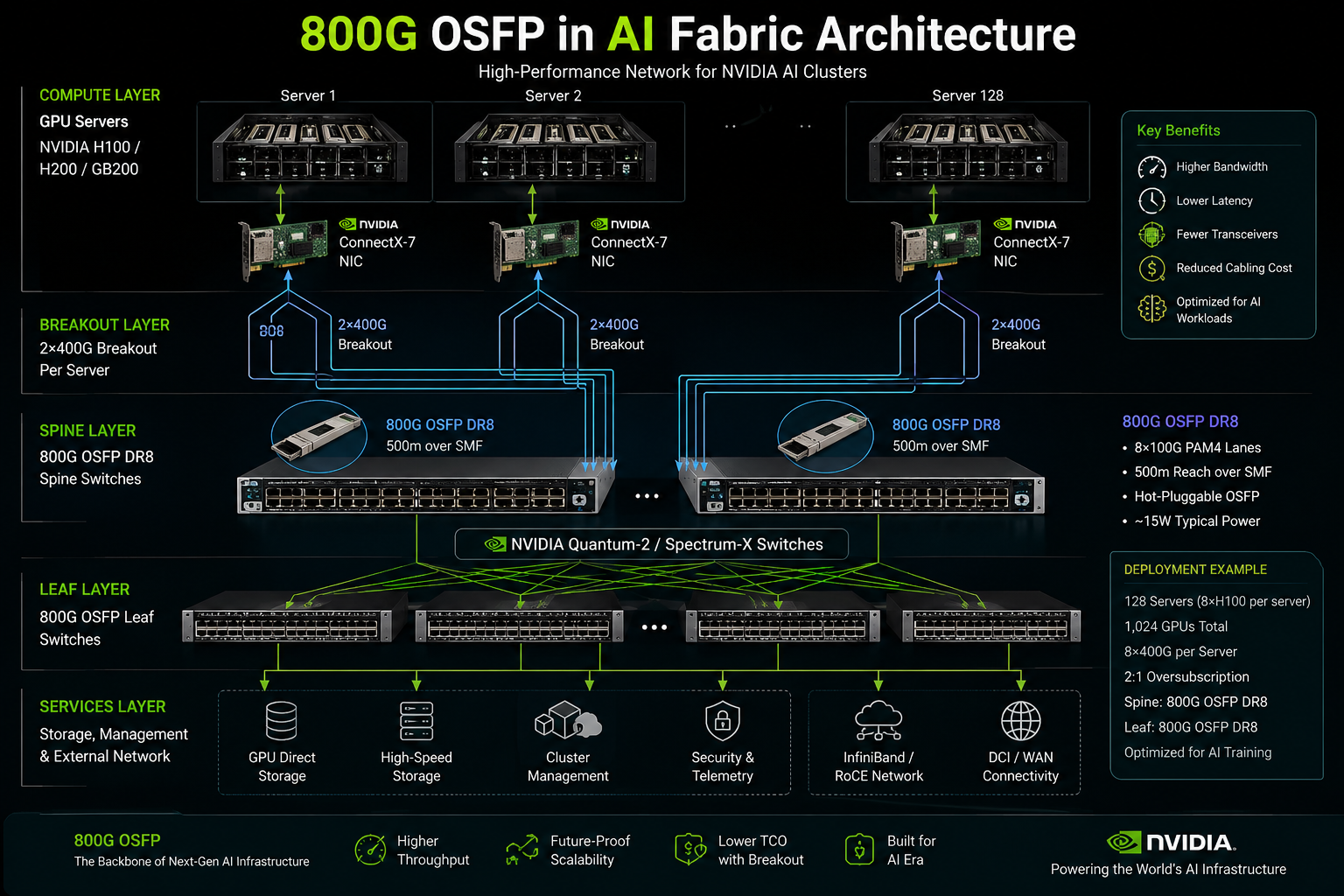

If AI is driving the transition to 800G networking, NVIDIA is one of the biggest reasons OSFP has become dominant.

Modern GPU clusters require:

To support these requirements, NVIDIA has heavily adopted OSFP across its networking ecosystem.

Platforms such as:

all rely heavily on OSFP-based optical infrastructure.

In a typical AI fabric architecture:

Compared with traditional 400G architectures, this design dramatically reduces:

This is one of the key reasons hyperscale AI operators are rapidly accelerating 800G OSFP adoption.

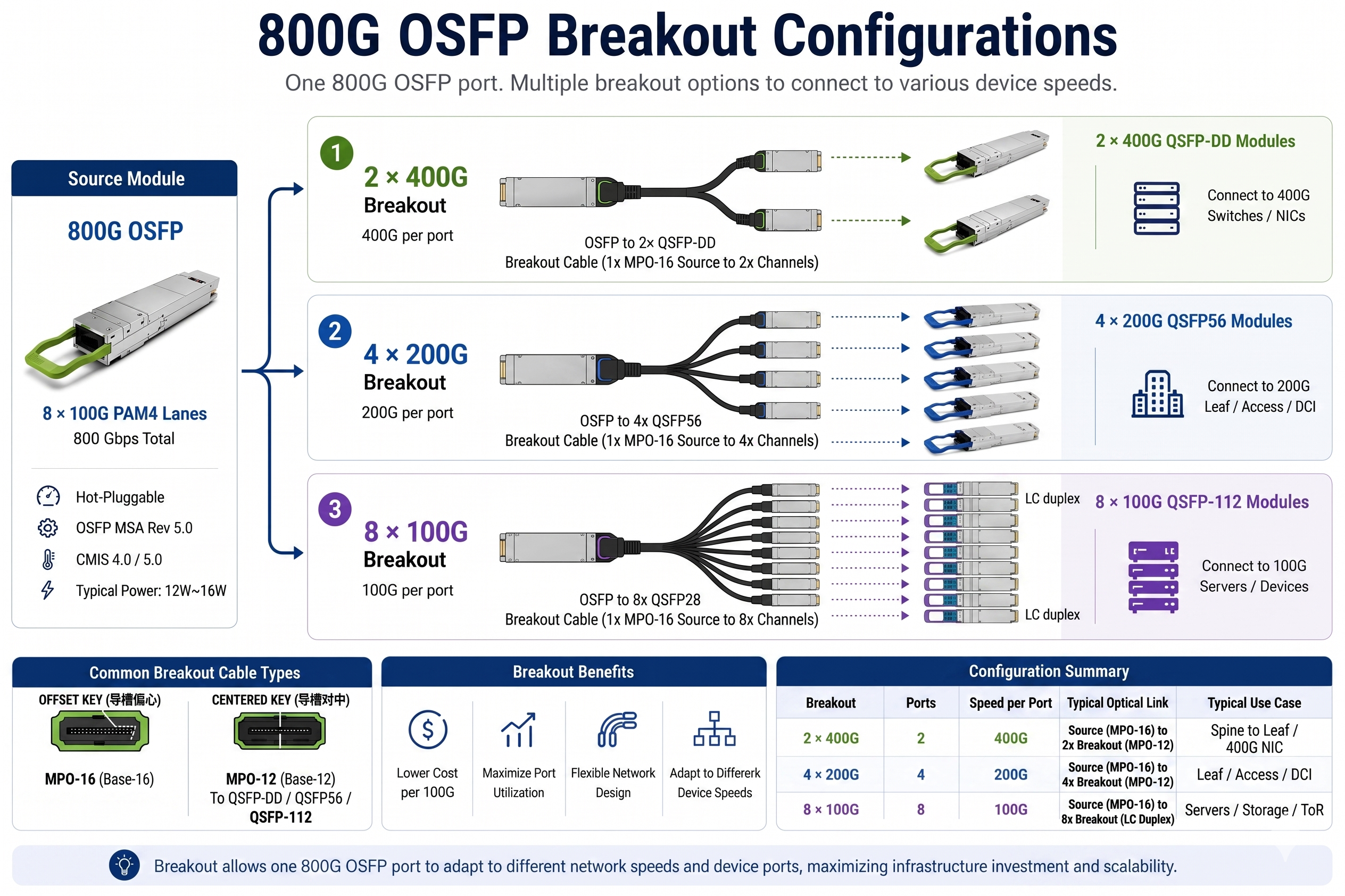

Many people assume the primary advantage of 800G networking is simply higher speed. In reality, one of the biggest benefits is breakout flexibility.

An 800G OSFP port can typically break out into:

This enables:

In AI fabrics, the most common architecture is: 800G to 2×400G breakout. In this design, a single 800G spine port connects directly to two 400G leaf or server connections.

Another widely used configuration is: 800G to 8×100G breakout. This is especially useful for:

For hyperscale operators, breakout efficiency often delivers more practical value than raw bandwidth increases alone.

The choice between 800G OSFP and QSFP-DD800 generally depends on your switch platform vendor and your power budget. Both form factors deliver 800G using 8x100G PAM4 signaling, but they differ considerably in thermal capacity and ecosystem support.

QSFP-DD800 retains the QSFP-DD form factor, so it offers some backward compatibility with previously installed 400G QSFP-DD modules and slightly higher port density. The smaller form factor, however, limits maximum power dissipation to about 15-17 watts, which restricts the module types that can be deployed without raising the risk of thermal throttling in dense deployments.

OSFP’s larger body supports 20+ watts comfortably. This makes it the safer choice for the highest-power 800G variants like 2xLR4 (18W typical) and emerging coherent 800ZR modules. For comparison, see our QSFP-DD vs OSFP comparison guide for a complete decision framework.
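A quick way to reason about this trade-off is to compare a module's power draw with the form factor's thermal envelope. The sketch below uses the approximate limits quoted in this section (about 17W for QSFP-DD800 and about 20W for OSFP); the 1W safety margin and the function names are assumptions for illustration, and actual limits depend on the switch's airflow design.

```python
# Approximate per-module thermal envelopes, taken from the figures in this section.
FORM_FACTOR_LIMIT_W = {
    "QSFP-DD800": 17.0,
    "OSFP": 20.0,
}


def thermal_margin_w(module_power_w: float, form_factor: str) -> float:
    """Headroom in watts between the form-factor limit and the module's draw."""
    return FORM_FACTOR_LIMIT_W[form_factor] - module_power_w


def fits_envelope(module_power_w: float, form_factor: str, min_margin_w: float = 1.0) -> bool:
    """True if the module leaves at least min_margin_w of headroom (assumed policy)."""
    return thermal_margin_w(module_power_w, form_factor) >= min_margin_w


if __name__ == "__main__":
    # 2xLR4 at 18W typical: fits OSFP, exceeds the QSFP-DD800 envelope.
    print(fits_envelope(18.0, "OSFP"))        # True
    print(fits_envelope(18.0, "QSFP-DD800"))  # False
    # DR8 at 15W typical: fits either form factor under these assumptions.
    print(fits_envelope(15.0, "QSFP-DD800"))  # True
```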

Choose OSFP when:

Choose QSFP-DD800 when:

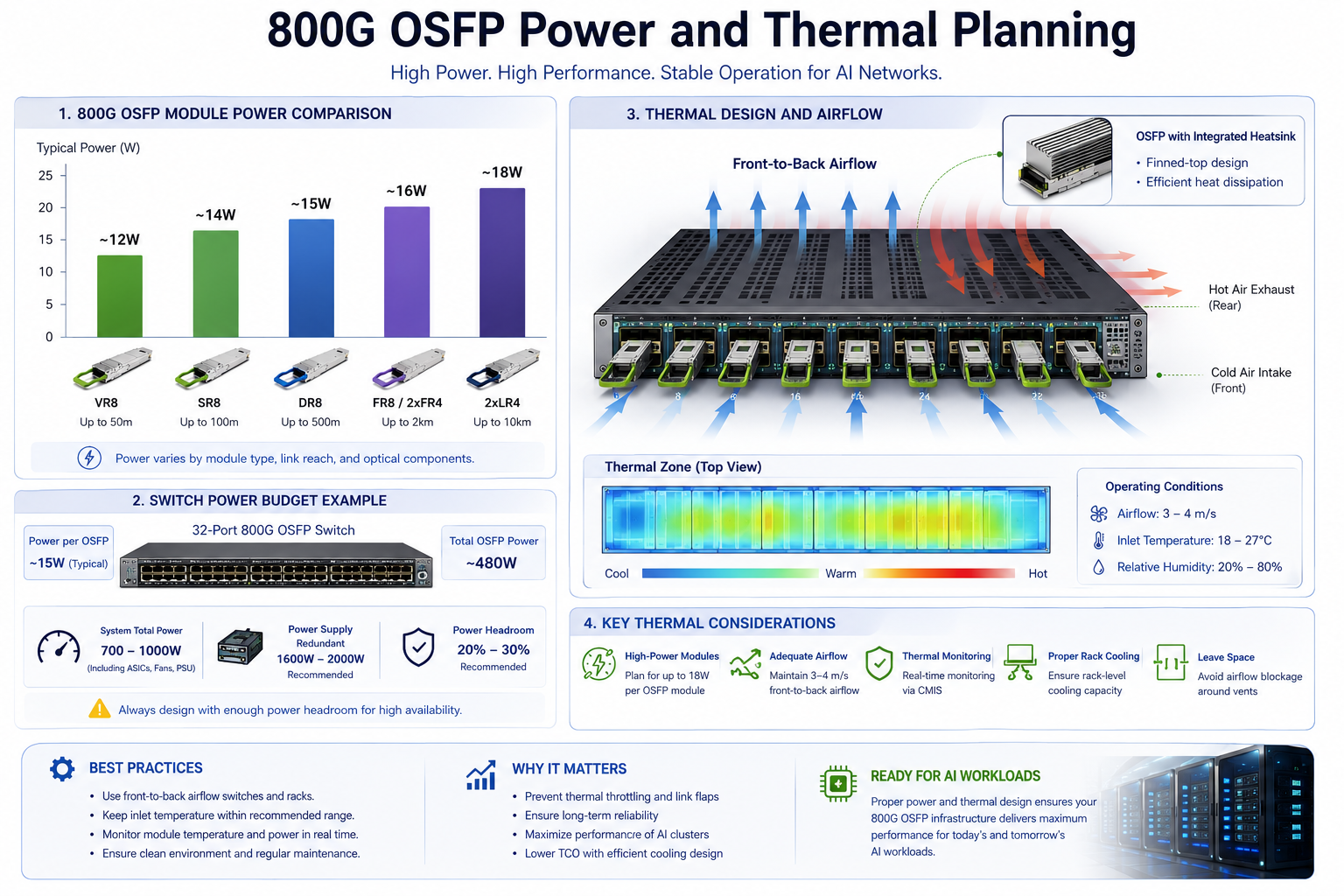

Power consumption and thermal management have become some of the most important engineering considerations in real-world 800G OSFP deployments. Compared with earlier 100G and 400G generations, 800G optics operate at significantly higher power levels, especially in AI fabrics and high-density hyperscale switches running sustained east-west traffic workloads.

Typical 800G OSFP module power consumption ranges from approximately 12W for VR8 modules to 18W or higher for long-reach 2xLR4 optics. In fully populated 800G switches, the total optical power load can quickly scale into several hundred watts, making airflow and rack-level cooling critical parts of network design.

| Module Type | Typical Power | Maximum Power |
| --- | --- | --- |
| 800G OSFP VR8 | 12W | 14W |
| 800G OSFP SR8 | 14W | 16W |
| 800G OSFP DR8 | 15W | 17W |
| 800G OSFP FR8 | 16W | 18W |
| 800G OSFP 2xLR4 | 18W | 20W |

A fully populated 32-port 800G OSFP switch using DR8 modules may consume approximately 480–550W for optics alone, depending on module configuration and operating conditions. With higher-power optics such as 2xLR4, total optical power consumption can exceed 575W.
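The optics power figures above follow directly from multiplying per-module power by port count. The following sketch reproduces that arithmetic using the typical and maximum values from the power table; the dictionary and function names are ours.

```python
# (typical W, maximum W) per module, from the power table in this guide.
MODULE_POWER_W = {
    "VR8": (12, 14),
    "SR8": (14, 16),
    "DR8": (15, 17),
    "FR8": (16, 18),
    "2xLR4": (18, 20),
}


def optics_power_w(module: str, ports: int = 32) -> tuple:
    """Return (typical, maximum) optics power in watts for a fully populated switch."""
    typical, maximum = MODULE_POWER_W[module]
    return ports * typical, ports * maximum


if __name__ == "__main__":
    print(optics_power_w("DR8"))    # (480, 544)
    print(optics_power_w("2xLR4"))  # (576, 640)
```

These totals cover optics only; switch ASIC, fan, and power-supply losses come on top.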

When combined with modern switch ASIC power consumption, overall system power for a single 800G switch commonly reaches 700–1,000W or more.

In AI training environments, power density increases rapidly at the rack level. For example, an 8-switch AI fabric pod may require roughly 6–8kW of continuous power for network switches and optical modules alone, excluding GPU server power consumption. A single NVIDIA DGX H100 or similar AI server platform can independently consume multiple kilowatts under sustained training workloads.

As AI cluster density continues to scale, thermal planning is becoming just as important as switch bandwidth or port count.

Many 800G OSFP modules are available in two thermal designs: flat-top and finned-top.

Finned-top OSFP modules include integrated heatsink fins that improve airflow interaction and heat dissipation, making them better suited for high-power or sustained-load environments. These variants are commonly recommended for:

However, thermal compatibility depends heavily on switch airflow design. Some switches are validated only for specific OSFP thermal classes or heatsink profiles. Before deployment, always verify:

based on the switch vendor’s hardware documentation.

The OSFP MSA defines airflow and thermal recommendations to maintain stable module operation under high-density conditions. In practice, most 800G OSFP platforms require strong front-to-back airflow and carefully controlled intake temperatures to sustain optimal performance.

Insufficient airflow or elevated inlet temperatures may lead to:

These issues become especially important in AI and HPC environments where switches often operate continuously at very high utilization levels.

For deeper power planning guidance, see our QSFP-DD power consumption guide which covers similar principles for the previous-generation form factor.

Beyond raw bandwidth, one of the most important features of 800G OSFP is its breakout capability. A single 800G port can fan out to several lower-speed connections, improving switch port economics.

The most common breakout pattern is 800G OSFP DR8 (or SR8) to 2 x 400G links. This allows a single 800G spine switch port to connect directly to two 400G leaf switches or two 400G server NICs while keeping the spine port fully utilized.

Cable required: MPO-16 to dual MPO-8 harnesses or MPO-12 to dual MPO-12 harnesses (depending on the module type).

For maximum flexibility, 800G OSFP can break out to eight independent 100G connections. This is the dominant pattern for connecting 800G AI cluster spines to 100G QSFP28 or QSFP112 server NICs.

Required cable: MPO-16 (or dual MPO-12) to eight duplex LC harness cables.
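Conceptually, breakout divides the module's eight 100G lanes into equal groups, one group per logical port. The sketch below shows that partitioning; the sequential lane grouping and the function name are assumptions for illustration, since the actual lane-to-port mapping is defined by the switch platform and the cable harness.

```python
LANE_GBPS = 100  # each electrical/optical lane carries 100G


def breakout_lanes(ports: int, total_lanes: int = 8) -> list:
    """Split lanes 0..total_lanes-1 into `ports` equal, contiguous groups."""
    if ports <= 0 or total_lanes % ports != 0:
        raise ValueError("lane count must divide evenly across breakout ports")
    per_port = total_lanes // ports
    return [list(range(i * per_port, (i + 1) * per_port)) for i in range(ports)]


if __name__ == "__main__":
    for ports in (2, 8):
        groups = breakout_lanes(ports)
        speed = len(groups[0]) * LANE_GBPS
        print(f"{ports} x {speed}G:", groups)
    # 2 x 400G: [[0, 1, 2, 3], [4, 5, 6, 7]]
    # 8 x 100G: [[0], [1], [2], [3], [4], [5], [6], [7]]
```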

Breakout can dramatically reduce the cost per 100G of capacity. A pair of 800G OSFP SR8 modules configured for 8 x 100G breakout can cost 35-45 percent less than sixteen 100G modules delivering the same total bandwidth. Management also becomes simpler, with fewer switch ports, less complicated fiber maintenance, and fewer modules to track.
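To compare the two approaches for your own pricing, the savings can be computed as below. This is a minimal sketch: the prices in the usage example are placeholders chosen only to exercise the formula, not market data, and the calculation ignores harness cables and far-end optics. The function name is hypothetical.

```python
def breakout_saving_pct(price_800g: float, price_100g: float) -> float:
    """Percent saved by two 800G modules in 8x100G breakout vs sixteen 100G modules.

    Both options deliver 1.6T of switch-side capacity.
    """
    breakout_cost = 2 * price_800g
    discrete_cost = 16 * price_100g
    return 100.0 * (discrete_cost - breakout_cost) / discrete_cost


if __name__ == "__main__":
    # Placeholder prices in arbitrary currency units (assumed, not quoted anywhere).
    print(breakout_saving_pct(price_800g=600.0, price_100g=125.0))  # 40.0
```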

Not every switch supports 800G OSFP today. Verify platform compatibility before procurement.

| Vendor | Platform | 800G OSFP Ports |
| --- | --- | --- |
| Arista | 7060X6 / 8000 Series | 32 ports per 1U |
| Cisco | 8100 / 8200 Series | 32 ports per 1U |
| NVIDIA | Quantum-2 (QM9700/QM9790) | 32 ports per 1U (InfiniBand) |
| NVIDIA | Spectrum-X (SN5600) | 64 ports per 2U (Ethernet) |
| Juniper | PTX10008 / QFX5200 | Roadmap 2026 |

For broader platform context, see our QSFP28 compatible switches guide covering switch compatibility principles that also apply to higher-speed form factors.

A cloud provider builds an AI training pod with 256 NVIDIA H100 GPUs across 16 servers. Each server runs 16 transceivers, requiring 256 connections total. Using 800G OSFP DR8 modules at the spine with 2x400G breakout, the deployment needs:

In March 2025, a local ISP, LiWei, interconnected two data centers 8 kilometers apart by deploying 800G OSFP 2xLR4 modules. The link had previously relied on four CFP DCO transponders consuming up to 80W. Moving to 800G OSFP eliminated the entire transponder shelf, reclaimed 3 rack units, lowered power consumption by 65%, and integrated cleanly with the existing router.

A tier-1 telecom operator is building new data centers worldwide with 400G QSFP-DD at the leaf layer and 800G OSFP at the spine layer. The 800G spine ports connect to the leaf switches using 2x400G breakout, providing scalable capacity while protecting existing investments in 400G modules and switches at the leaf level.

The next major step beyond 800G is 1.6T, with commercial volume shipments expected in 2026-2027. The OSFP ecosystem is well positioned for the transition through OSFP-XD, which doubles the electrical lanes from 8 to 16 while maintaining the same module footprint.

OSFP-XD modules use 16 electrical lanes running at 100 Gbps PAM4 each, for an aggregate throughput of 1.6T (or 200 Gbps PAM4 per lane in variants targeting ultra-high-density 3.2T). Upcoming module types include 1.6T SR16, DR16, FR8, and LR8 variants.
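The aggregate figures follow from lane count times per-lane rate, as this short sketch shows. The function name is ours, and the rates are nominal payload rates rather than raw line rates.

```python
def aggregate_tbps(lanes: int, lane_gbps: int) -> float:
    """Nominal aggregate throughput in Tbps for a given lane count and per-lane rate."""
    return lanes * lane_gbps / 1000


if __name__ == "__main__":
    print(aggregate_tbps(8, 100))    # 0.8  (800G OSFP)
    print(aggregate_tbps(16, 100))   # 1.6  (OSFP-XD at 100G per lane)
    print(aggregate_tbps(16, 200))   # 3.2  (OSFP-XD at 200G per lane)
```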

For 1.6T deployments, module power of 25-30W is roughly the upper limit of what air cooling can handle, which pushes current thermal designs to their boundaries. Some hyperscale operators are considering liquid cooling for 1.6T deployments, while others are exploring whether on-board optics (OBO) and co-packaged optics (CPO) can reduce module power.

Equipment purchased in 2026 should support 800G OSFP at a minimum, with an eye toward more advanced OSFP-XD 1.6T hardware. Networks built on OSFP today have a clear path to 1.6T without changing form factors.

The 800G OSFP transceiver has become the foundation of modern AI infrastructure and hyperscale networking. By combining 8x100G PAM4 electrical signaling with optimized optical architectures, the OSFP form factor delivers 800 gigabits per second of bandwidth with the thermal headroom needed for sustained AI workloads.

Here is what to remember:

Whether you are building an AI training cluster with NVIDIA H100 GPUs, upgrading a hyperscale spine, or extending metro DCI to 800G, the 800G OSFP transceiver provides the speed, power efficiency, and ecosystem support modern networks demand.

Ready to source 800G OSFP transceivers for your network? Contact AscentOptics for MSA-compliant SR8, DR8, FR8, 2xLR4, and VR8 modules, factory-direct pricing, and free platform compatibility verification.

A: Both form factors support the same data rates, but OSFP was designed with greater thermal headroom, while QSFP-DD (Quad Small Form-factor Pluggable Double Density) offers slightly higher port density. The larger OSFP body with its integrated heatsink dissipates heat more effectively than the more established QSFP-DD form factor, which can struggle with thermal limits at 800G.

A: 800G OSFP transceivers are primarily deployed in next-generation data center spine-leaf switches, cloud infrastructure, and AI/ML clusters. They serve interconnects that need ultra-high bandwidth for massive data processing workloads.

A: Transmission distance depends on the optical interface chosen. The most common types are SR8 (short reach, up to 100 meters over multimode fiber) and DR8 (data center reach, typically up to 500 meters over single-mode fiber), with longer-reach variants such as 2xFR4 (2 km) and 2xLR4 (10 km) for campus, inter-building, and metro links.

A: The main benefits are substantially higher bandwidth without doubling the number of physical ports, lower power per bit than legacy modules, and a future-proofed network infrastructure. The form factor also supports high switch port density, letting network operators scale throughput in space-constrained racks.