An AI infrastructure architect at a hyperscale cloud provider faced a critical procurement decision that would impact her team’s GPU cluster economics for the next three years. The project required 128 NVIDIA DGX H100 servers (1,024 H100 GPUs) interconnected via NVIDIA Quantum-2 InfiniBand NDR switches. At NVIDIA list pricing, the transceivers alone exceeded $1.5 million. By validating compatible third-party OSFP modules with ConnectX-7 NICs, her team reduced costs by $850,000 — a 55% savings — without any compromise in link quality or Bit Error Rate performance.

This is the reality of procuring OSFP modules for large-scale NVIDIA InfiniBand deployments. NVIDIA’s official optical portfolio is extensive and expensive. While their technical requirements are comprehensive, third-party alternatives can offer significant savings if properly validated. The success of your cluster deployment often hinges on correct form factor selection, accurate SKU matching, and appropriate cable choices.

This guide explains how OSFP transceivers work in NVIDIA InfiniBand NDR networks, which SKUs are required for ConnectX-7 NICs and Quantum-2 switches, the critical differences between IHS and RHS form factors, and how to evaluate third-party options for maximum cost savings while maintaining full compatibility.

NVIDIA selected the OSFP form factor for its NDR (400G InfiniBand) ecosystem because it provides the thermal headroom and signal integrity required for 100G PAM4 lane operation. Each NDR link operates at 400Gb/s using 4 × 100G PAM4 electrical and optical lanes.

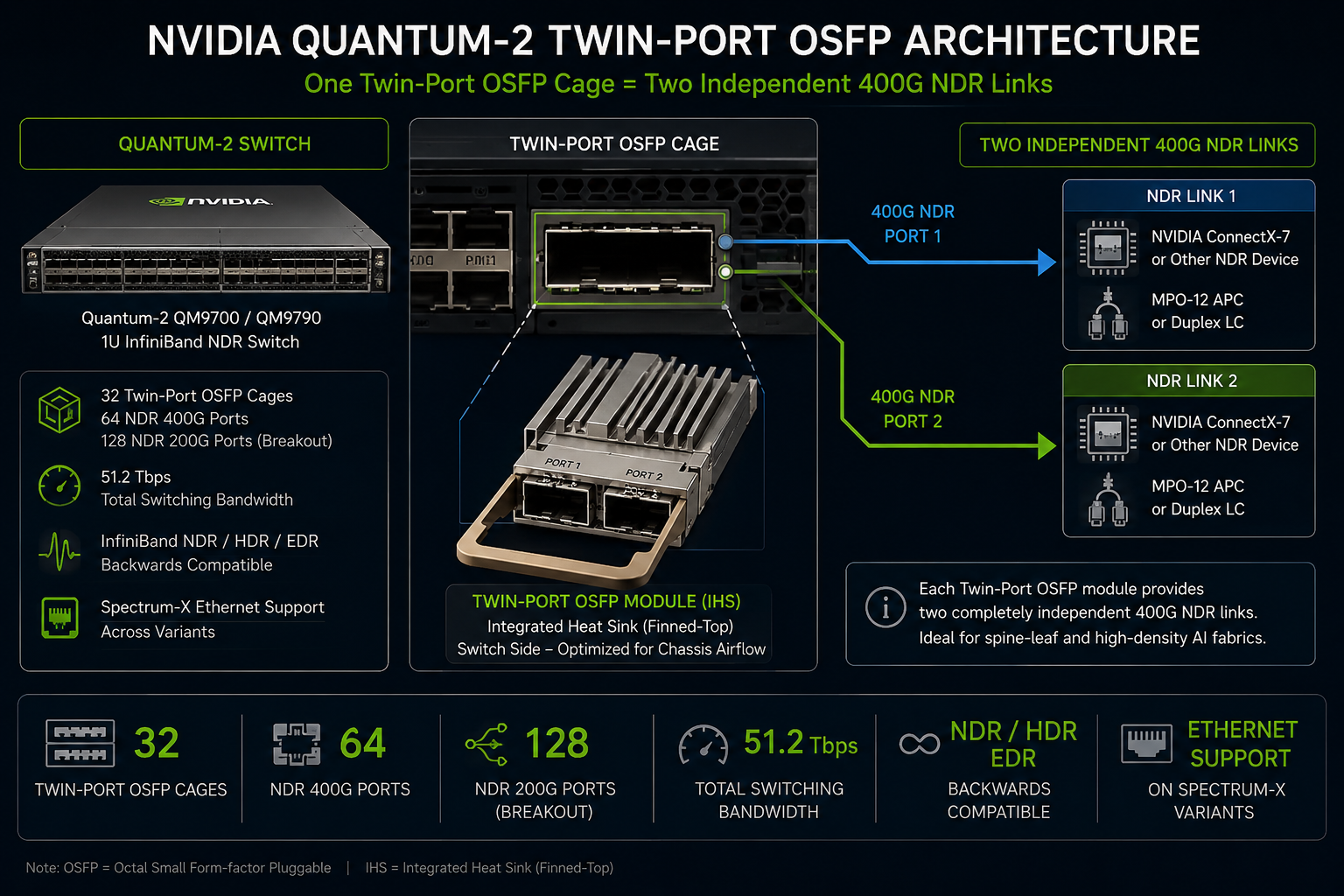

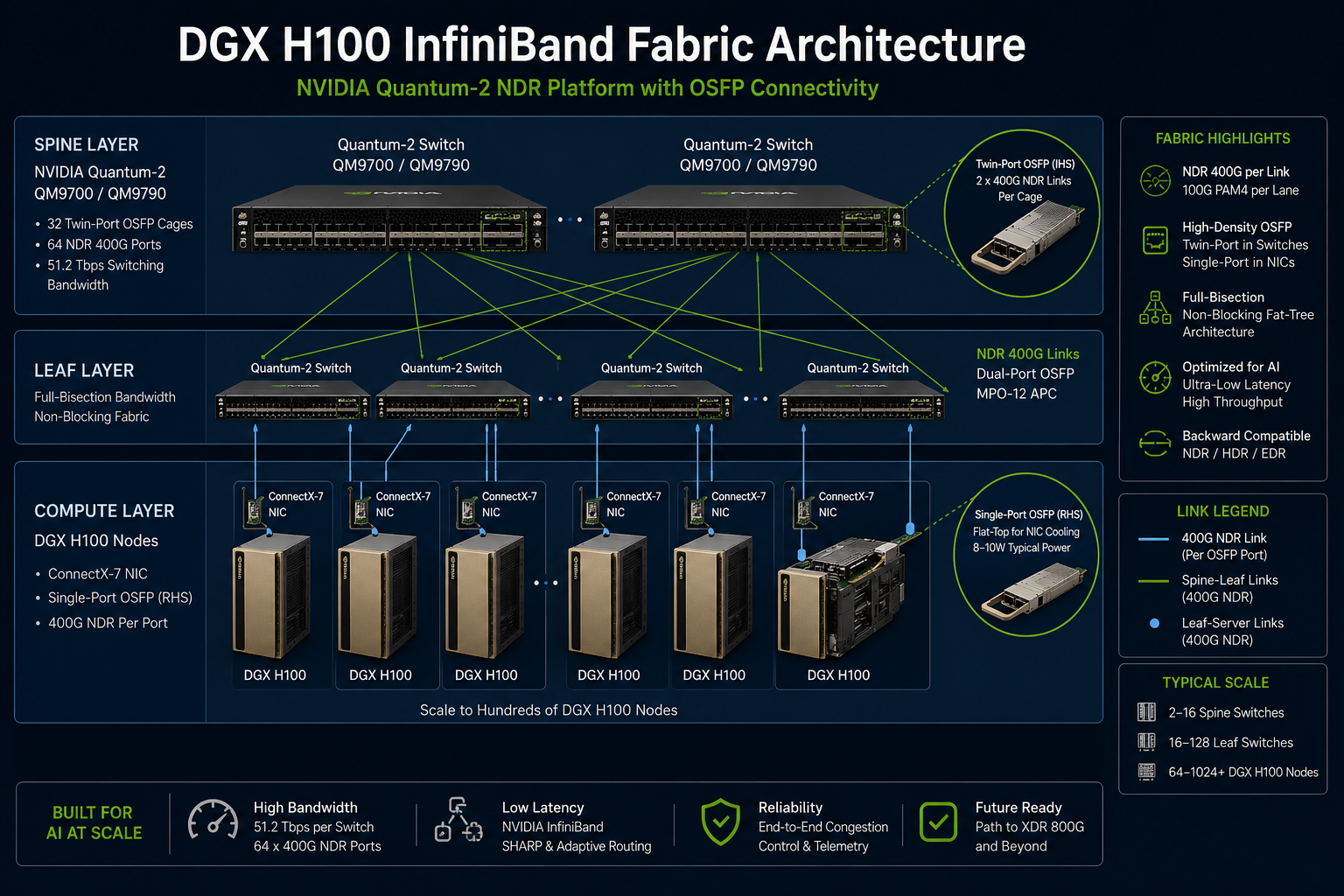

On Quantum-2 switches, NVIDIA uses a unique twin-port OSFP implementation in which a single OSFP cage carries two independent 400G InfiniBand links, enabling extremely high port density within a 1U chassis.

The NVIDIA Quantum-2 platform includes the QM9700 and QM9790 InfiniBand switches, each providing 32 twin-port OSFP cages in a compact 1U form factor. These cages support up to 64 NDR 400G InfiniBand ports or 128 NDR200 connections through breakout configurations.

The platform delivers up to 51.2Tb/s of aggregate bidirectional switching bandwidth and is optimized for large-scale AI and HPC fabrics.
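The port and bandwidth figures above follow from simple arithmetic. The sketch below reproduces them from the quoted cage count and link rate; the function and constant names are illustrative only and not part of any NVIDIA tool.

```python
# Port and bandwidth arithmetic for a Quantum-2 switch, using the figures quoted above.
CAGES_PER_SWITCH = 32   # twin-port OSFP cages in a 1U QM9700/QM9790
LINKS_PER_CAGE = 2      # two independent NDR links per cage
NDR_GBPS = 400          # per-link rate: 4 x 100G PAM4 lanes


def switch_capacity(cages=CAGES_PER_SWITCH, links_per_cage=LINKS_PER_CAGE, gbps=NDR_GBPS):
    """Return port counts and aggregate bandwidth for one switch."""
    ndr_ports = cages * links_per_cage
    unidirectional_tbps = ndr_ports * gbps / 1000
    return {
        "ndr_ports": ndr_ports,
        "ndr200_ports": ndr_ports * 2,  # each 400G link broken out into 2 x 200G
        "unidirectional_tbps": unidirectional_tbps,
        "bidirectional_tbps": 2 * unidirectional_tbps,
    }


print(switch_capacity())
```

The bidirectional figure is twice the one-way total, which is how 64 ports at 400G arrive at the quoted 51.2 Tb/s.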

NVIDIA ConnectX-7 adapters are designed for NDR 400G InfiniBand and 400GbE deployments. The platform supports PCIe Gen5 x16 connectivity and is available with either OSFP or QSFP112 network interfaces.

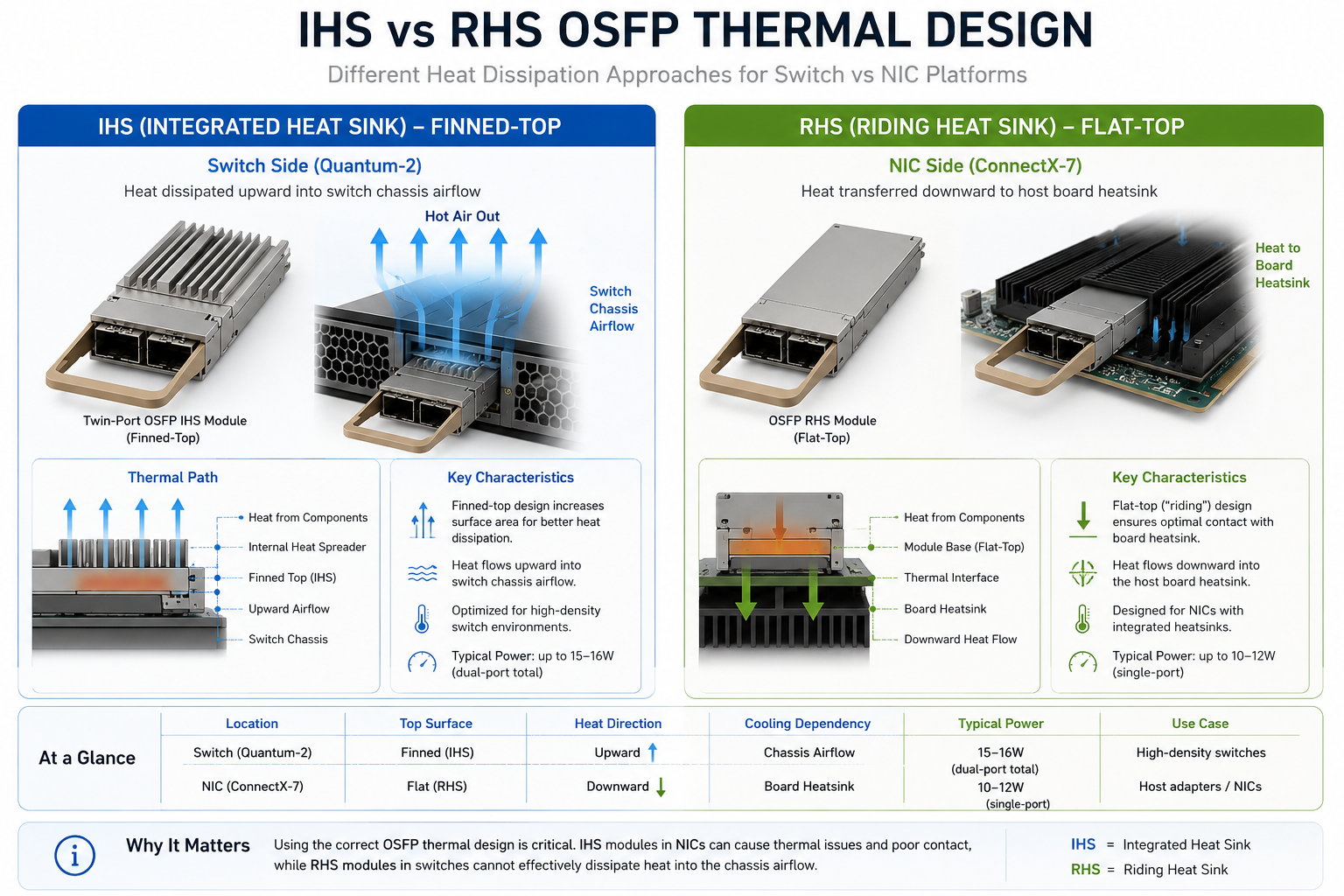

For OSFP-based configurations, ConnectX-7 adapters use flat-top RHS (Riding Heat Sink) transceivers. In this design, thermal dissipation is handled primarily by the NIC’s integrated heatsink structure rather than by fins on the module itself.

NVIDIA adopted OSFP for NDR InfiniBand primarily because of its superior thermal and power handling capabilities. Compared with QSFP-DD, OSFP provides additional thermal headroom, making it more suitable for sustained 100G PAM4 lane operation in dense AI fabrics.

The OSFP ecosystem also aligned well with NVIDIA’s long-term roadmap toward higher-density 800G and future 1.6T interconnect architectures.

Thermal compatibility is essential when selecting OSFP modules for NVIDIA platforms. Using the wrong variant can result in modules that physically will not fit.

IHS (Integrated Heat Sink) OSFP modules are designed primarily for switch environments where airflow moves directly across finned-top transceivers. RHS (Riding Heat Sink) flat-top modules are optimized for NIC environments in which cooling is handled by the adapter’s integrated thermal assembly.

Although both variants share the same electrical signaling architecture, they are not mechanically or thermally interchangeable in most NVIDIA deployments.

NIC-side modules use a flat-top design optimized to work under the heatsink of ConnectX-7, ConnectX-8, and BlueField-3 cards. These single-port modules support 400G (MPO-12 APC or duplex LC) with power consumption of 8W at 400G and 5.5W at 200G.

IHS (finned) modules are too tall for NIC cages, while flat-top RHS modules lack sufficient cooling in switch chassis designed for IHS airflow. Mixing them leads to physical fitment or thermal issues.
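A pre-order check can catch this kind of mismatch before hardware ships. The following is a minimal sketch, assuming a purchase order can be reduced to (platform, thermal variant) pairs. The platform labels and the `find_mismatches` helper are hypothetical, and the DGX H100 entry reflects the flat-top requirement this guide states for server-side ports.

```python
from enum import Enum


class Thermal(Enum):
    IHS = "finned-top, Integrated Heat Sink"
    RHS = "flat-top, Riding Heat Sink"


# Hypothetical platform labels; the mapping follows the rules described in the text.
REQUIRED = {
    "quantum-2-switch": Thermal.IHS,
    "connectx-7-nic": Thermal.RHS,
    "dgx-h100": Thermal.RHS,
}


def find_mismatches(order_lines):
    """Return one message per (platform, thermal) pair that does not match."""
    problems = []
    for platform, thermal in order_lines:
        required = REQUIRED.get(platform)
        if required is None:
            problems.append(f"{platform}: unknown platform, verify manually")
        elif thermal is not required:
            problems.append(f"{platform}: ordered {thermal.name}, needs {required.name}")
    return problems


order = [("quantum-2-switch", Thermal.IHS), ("dgx-h100", Thermal.IHS)]
for message in find_mismatches(order):
    print(message)
```

Unknown platforms are reported rather than silently accepted, since a wrong guess here means modules that do not fit.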

In one real-world case, a national research lab engineer ordered finned IHS modules for both switch and DGX H100 server sides, causing physical incompatibility and a 10-day delay. Always match the thermal profile to the platform.

ConnectX-7 NICs are also available with QSFP112 receptacles for 400G NDR connectivity. QSFP112 uses the same 4 × 100G PAM4 architecture as OSFP. NVIDIA’s MMA1Z00-NS400 is the QSFP112 counterpart to the single-port OSFP MMA4Z00-NS400, with identical optics and performance. The choice depends on the specific NIC port design and surrounding equipment.

NVIDIA uses a consistent SKU naming convention that encodes form factor, fiber type, and reach.

The MMA4Z00-NS series represents NVIDIA’s twin-port OSFP transceivers for Quantum-2 InfiniBand switches.

Rather than operating as a single 800G Ethernet optical link, these modules carry two independent 400G NDR InfiniBand connections within a shared OSFP form factor.

Typical characteristics include:

The single-port 400G NDR modules for ConnectX-7 adapters are the MMA4Z00-NS400 (OSFP, flat-top RHS) and the MMA1Z00-NS400 (QSFP112). Both use the same 850 nm VCSEL technology as the twin-port modules, with MPO-12 APC connectors and a reach of up to 50 m over OM4.

Power consumption is 8 W at 400G and 5.5 W at 200G.

The MMS4X00 series covers the single-mode twin-port OSFP variants for runs beyond multimode reach. Reach depends on the specific SKU, as listed in the table below. MMS1X00-NS400 is the corresponding single-port version for the NIC side.

Single-mode modules are generally used for cross-row links in large data centers, or where the reach of multimode fiber is too limited for campus-scale AI cluster fabrics.

| SKU Series | AscentOptics P/N | Form Factor | Speed | Fiber | Reach | Power |
|---|---|---|---|---|---|---|
| MMA4Z00-NS | O12-800M885-5TCX | Twin-port OSFP IHS | 2 × 400G NDR | OM4 MMF | 50m | 15W |
| MMA4Z00-NS-FLT | O12-800M885-5TCX-FT | Twin-port OSFP RHS | 2 × 400G NDR | OM4 MMF | 50m | 15W |
| MMS4X00-NS | O12-800M885-1HCX | Twin-port OSFP IHS | 2 × 400G NDR | OS2 SMF | 100m | 16W |
| MMS4X00-NS-FLT | O12-800M885-1HCX-FT | Twin-port OSFP RHS | 2 × 400G NDR | OS2 SMF | 100m | 16W |
| MMS4X00-NM | O12-800S831-5HCX | Twin-port OSFP IHS | 2 × 400G NDR | OS2 SMF | 500m | 16W |
| MMS4X00-NM-FLT | O12-800S831-5HCX-FT | Twin-port OSFP RHS | 2 × 400G NDR | OS2 SMF | 500m | 16W |
| MMA4Z00-NS400 | O56-400M485-1HCM | OSFP | 400G NDR | OM4 MMF | 50m | 8W |
| MMS4X00-NS400 | O56-400S431-5HCM | OSFP | 400G NDR | OS2 SMF | 500m | 10W |
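For planning scripts, the table can be expressed as data and filtered by requirement. This is a minimal sketch that copies the SKU, reach, and power columns from the table above. The `Module` type and `candidates` helper are hypothetical, and `thermal` is left as `None` for the single-port rows because the table does not state it.

```python
from typing import NamedTuple, Optional


class Module(NamedTuple):
    sku: str
    twin_port: bool
    thermal: Optional[str]  # "IHS", "RHS", or None where the table does not say
    fiber: str              # "MMF" (OM4) or "SMF" (OS2)
    reach_m: int
    power_w: int


CATALOG = [
    Module("MMA4Z00-NS", True, "IHS", "MMF", 50, 15),
    Module("MMA4Z00-NS-FLT", True, "RHS", "MMF", 50, 15),
    Module("MMS4X00-NS", True, "IHS", "SMF", 100, 16),
    Module("MMS4X00-NS-FLT", True, "RHS", "SMF", 100, 16),
    Module("MMS4X00-NM", True, "IHS", "SMF", 500, 16),
    Module("MMS4X00-NM-FLT", True, "RHS", "SMF", 500, 16),
    Module("MMA4Z00-NS400", False, None, "MMF", 50, 8),
    Module("MMS4X00-NS400", False, None, "SMF", 500, 10),
]


def candidates(reach_m, twin_port, thermal=None):
    """Return SKUs that cover reach_m, shortest-reach (then lowest-power) first."""
    hits = [
        m for m in CATALOG
        if m.twin_port == twin_port
        and m.reach_m >= reach_m
        and (thermal is None or m.thermal == thermal)
    ]
    return [m.sku for m in sorted(hits, key=lambda m: (m.reach_m, m.power_w))]


print(candidates(300, twin_port=True, thermal="IHS"))
print(candidates(30, twin_port=False))
```

Sorting by reach first keeps the cheapest adequate optic at the top of the list, on the assumption that shorter-reach parts cost less.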

For deeper context on 800G OSFP variants, see our 800G OSFP transceiver guide.

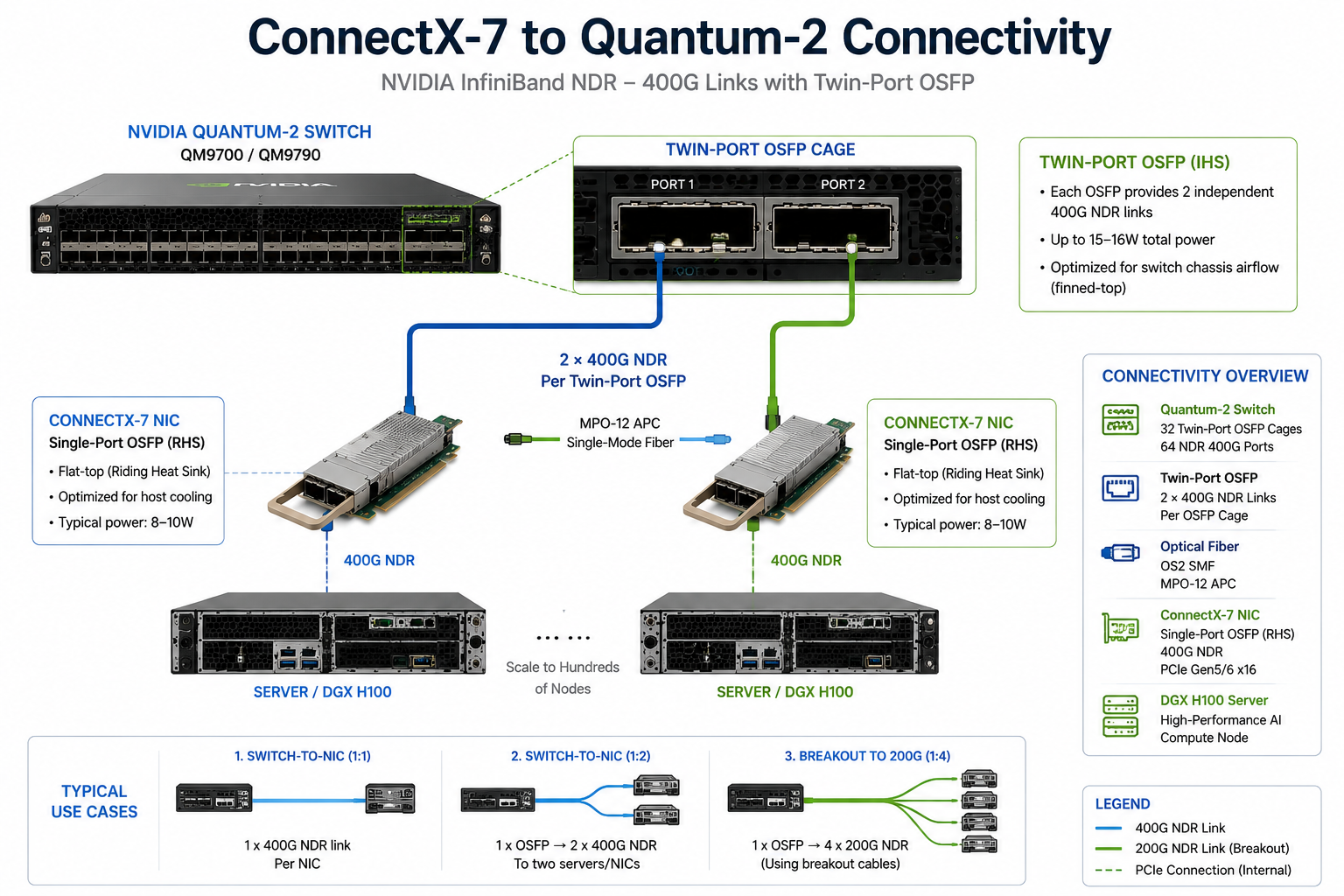

NVIDIA’s twin-port OSFP architecture enables three primary connectivity patterns. Understanding these is essential for cable harness planning and bandwidth optimization.

Two Quantum-2 switches can be interconnected using twin-port OSFP modules and parallel fiber connections.

This deployment model is commonly used for:

One of the most popular deployment patterns connects one Quantum-2 switch cage to two separate ConnectX-7 NICs at 400G each. The twin-port OSFP module on the switch runs over two straight MFP7E10 fiber cables, each terminating in a single-port OSFP (MMA4Z00-NS400) or QSFP112 (MMA1Z00-NS400) module at the NIC side.

This pattern is the usual way to connect a single 800G switch cage to two GPU servers. It doubles the number of endpoints served per cage without adding switch hardware cost.

For the densest NIC configuration, a twin-port OSFP module can connect to four ConnectX-7 NICs at 200G each, using 1-to-2 splitter fiber cables (MFP7E20-Nxxx). Each splitter creates two 200G connections from one of the 400G links, so each NIC uses only two lanes per direction.

In this arrangement, the 400G NIC transceivers switch to 200G operation when they detect the reduced lane count. Power consumption per NIC transceiver drops from 8 W to 5.5 W, while the twin-port OSFP module on the switch stays at 15 W regardless.
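To compare the two switch-to-NIC patterns side by side, the sketch below tallies NIC count, bandwidth, and optics power per switch cage. It uses the 8 W, 5.5 W, and 15 W figures from the text and the 32-cage count quoted for Quantum-2. The pattern names and helper functions are made up for illustration.

```python
SWITCH_MODULE_W = 15.0  # twin-port OSFP module, the same in both patterns
CAGES_PER_SWITCH = 32   # Quantum-2 cage count quoted earlier in this guide

PATTERNS = {
    # name: (NICs per cage, Gb/s per NIC, watts per NIC-side transceiver)
    "2x400G": (2, 400, 8.0),
    "4x200G": (4, 200, 5.5),
}


def per_cage(pattern):
    """Summarize what one switch cage serves under the given pattern."""
    nics, gbps, nic_w = PATTERNS[pattern]
    return {
        "nics": nics,
        "gbps_per_nic": gbps,
        "cage_gbps": nics * gbps,
        "optics_w": SWITCH_MODULE_W + nics * nic_w,  # switch module plus NIC modules
    }


def nics_per_switch(pattern, cages=CAGES_PER_SWITCH):
    """Number of NICs one fully populated switch can serve."""
    return PATTERNS[pattern][0] * cages


for name in PATTERNS:
    print(name, per_cage(name), "NICs per switch:", nics_per_switch(name))
```

Both patterns carry the same 800G per cage. The 200G breakout doubles the NIC count and adds NIC-side transceivers, so total optics power per cage goes up even though each transceiver draws less.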

NVIDIA DGX H100 systems integrate multiple ConnectX-7 adapters to provide high-bandwidth InfiniBand connectivity for AI training clusters. Server-side OSFP ports typically require flat-top RHS transceivers to align with the server’s integrated thermal design.

Switch-side Quantum-2 ports continue to use finned-top IHS modules optimized for chassis airflow.

The transition from H100 to Blackwell-generation AI systems significantly increases east-west network bandwidth requirements, driving higher-density InfiniBand fabric deployments and accelerating demand for 800G-class optical interconnects.

The NVIDIA InfiniBand ecosystem supports four cable types. The right choice depends on reach, cost, and power budget.

| Cable Type | Reach | Cost | Power | Best For |
|---|---|---|---|---|
| DAC (Direct Attach Copper) | <=1.5m | Lowest | Lowest (passive) | Intra-rack connections |
| ACC (Active Copper Cable) | <=3m | Low | Low (~1W) | Adjacent rack connections |
| AOC (Active Optical Cable) | <=50m | Medium | Medium (~8W) | Mid-range with pre-terminated fiber |
| Optical Transceivers + Fiber | 50m+ | Higher | Highest (8-15W) | Long-reach, modular flexibility |

For most AI training cluster builds, optical transceivers with separate fiber cables provide the flexibility needed for changing cable plant requirements over time. Pre-terminated MFP7E10 or MFP7E20 cables simplify deployment when reach and topology are fixed.
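The reach thresholds in the cable table translate directly into a selection rule. The sketch below is one possible encoding, not a vendor tool. `choose_cable` and its `prefer_modular` flag are hypothetical, with the flag modeling the preference for separate transceivers and fiber when the cable plant may change.

```python
def choose_cable(reach_m, prefer_modular=False):
    """Pick the lowest-cost cable class from the table that covers reach_m."""
    if reach_m <= 0:
        raise ValueError("reach_m must be positive")
    if reach_m <= 1.5:
        return "DAC"
    if reach_m <= 3:
        return "ACC"
    if reach_m <= 50 and not prefer_modular:
        return "AOC"
    # Beyond AOC reach, or when modular flexibility is preferred.
    return "Optical transceivers + fiber"


for distance in (1.0, 2.5, 30, 120):
    print(distance, "m ->", choose_cable(distance))
```

Copper is always chosen where it reaches, since the table ranks it lowest on both cost and power.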

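NVIDIA’s LinkX cable ecosystem includes two primary fiber harnesses:

- MFP7E10: straight fiber cable, carrying one 400G link end to end
- MFP7E20: 1-to-2 splitter fiber cable, splitting one 400G link into two 200G connections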

Both use MPO-12 APC connectors (8-degree angle). UPC connectors are not compatible with NVIDIA InfiniBand NDR.

The two fibers attached to a twin-port module may differ in length, but both must be of the same type: either two straight cables or two splitters, never a mix. Fiber adds about 4.5 ns of latency per meter, so a substantial length difference between paired fibers can create timing skew.

For maximum AI training performance, paired fiber lengths should be kept within a few meters of each other as much as possible.
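The skew introduced by a length mismatch is easy to estimate from the 4.5 ns per meter figure. In the sketch below, the 3 m default tolerance is only an assumed reading of "a few meters" and is not an NVIDIA specification.

```python
NS_PER_METER = 4.5  # approximate fiber latency quoted in the text


def skew_ns(length_a_m, length_b_m):
    """Latency difference between two paired fibers, in nanoseconds."""
    return abs(length_a_m - length_b_m) * NS_PER_METER


def lengths_ok(length_a_m, length_b_m, max_delta_m=3.0):
    """True if the pair is within the assumed length tolerance."""
    return abs(length_a_m - length_b_m) <= max_delta_m


print(skew_ns(30, 28), "ns skew, within tolerance:", lengths_ok(30, 28))
print(skew_ns(30, 10), "ns skew, within tolerance:", lengths_ok(30, 10))
```

A check like this can be run against a cable schedule before ordering so that badly mismatched pairs are flagged early.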

While NVIDIA officially supports only qualified modules, the OSFP MSA ensures multi-vendor interoperability. High-quality third-party modules use the same DSP chipsets (Marvell, Broadcom, MaxLinear) and optics as OEM parts, with NVIDIA-compatible EEPROM programming.

Reputable third-party manufacturers procure the same DSP chipsets (Marvell/Inphi, Broadcom, MaxLinear) and VCSEL/EML optical sub-assemblies as NVIDIA’s contract manufacturers. They program the EEPROM with NVIDIA-compatible identification data and validate the modules against ConnectX-7 NICs and Quantum-2 switches.

The quality varies greatly from vendor to vendor. Certified third-party vendors test every module batch in actual NVIDIA platforms, certify CMIS compliance, and produce BER performance data that meets or exceeds the OEM specifications.

Third-party OSFP modules typically deliver 45-65% savings on large deployments. For 1,000+ modules, savings can range from $400,000 to $1.5 million. With proper validation and testing, performance matches or exceeds OEM specifications.
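To apply the 45-65% range to a specific budget, multiply the OEM transceiver spend by each bound. The sketch below does only that; the input spend is whatever your own OEM quote says, and `savings_range` is an illustrative helper.

```python
def savings_range(oem_spend, low=0.45, high=0.65):
    """Return (minimum, maximum) expected savings for a given OEM spend."""
    if not 0 <= low <= high <= 1:
        raise ValueError("expected 0 <= low <= high <= 1")
    return oem_spend * low, oem_spend * high


low_usd, high_usd = savings_range(1_500_000)
print(f"Expected savings: ${low_usd:,.0f} to ${high_usd:,.0f}")
```

The percentages are the typical range quoted above, so treat the result as a planning estimate until it is confirmed against real quotes.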

The trade-offs are real but manageable:

For procurement teams evaluating these trade-offs, the cost savings on capital expenditure typically far exceed any operational risk, provided you select a manufacturer with verified NVIDIA platform testing.

Power planning is critical in dense AI environments because optical transceivers contribute meaningful thermal load across large GPU clusters.

A fully populated Quantum-2 switch with 32 twin-port OSFP modules consumes approximately:

In a DGX-based AI rack, optical transceiver power consumption can exceed 1kW when both switch-side and server-side optics are included.
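Optics power can be budgeted with a simple sum. This sketch uses the multimode figures given in this guide (15 W per twin-port module, 8 W or 5.5 W per NIC-side transceiver). The module counts are inputs you supply, and single-mode parts would need the higher wattages from the SKU table.

```python
TWIN_PORT_W = 15.0              # twin-port OSFP module (multimode)
NIC_W = {400: 8.0, 200: 5.5}    # NIC-side transceiver, by operating speed in Gb/s


def optics_power_w(twin_port_modules, nic_modules=0, nic_gbps=400):
    """Total transceiver power in watts for the given module counts."""
    return twin_port_modules * TWIN_PORT_W + nic_modules * NIC_W[nic_gbps]


# One fully populated Quantum-2 switch: 32 twin-port modules.
print(optics_power_w(32), "W on the switch side")

# The same switch plus the 64 NIC-side modules it serves at 400G.
print(optics_power_w(32, nic_modules=64), "W switch plus NIC optics")
```

These totals cover transceivers only. Switch ASIC, fan, and server power come on top.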

Dense AI fabrics require carefully managed airflow.

NVIDIA Quantum-2 switches are designed for high-throughput airflow environments, while DGX servers use integrated thermal designs optimized for GPU cooling and high-speed networking.

Improper module selection can lead to:

NVIDIA’s next-generation InfiniBand platform, XDR (eXtreme Data Rate), increases per-lane speeds to 200G PAM4, enabling 800G NICs and 1.6T switch ports through the same physical OSFP form factor.

NVIDIA’s future XDR InfiniBand roadmap is expected to maintain OSFP-based physical connectivity while increasing lane signaling rates to 200G PAM4. This allows existing OSFP ecosystem experience and thermal architecture to evolve toward future 800G NICs and 1.6T-class switch systems.
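The port speeds for each generation follow from lane count times lane rate. The sketch below assumes four lanes per NIC port and the eight electrical lanes of an OSFP module ("Octal"), with the per-lane rates taken from the text.

```python
OSFP_LANES = 8       # eight electrical lanes per OSFP module
NIC_PORT_LANES = 4   # lanes used by a single NIC port

LANE_GBPS = {"NDR": 100, "XDR": 200}  # PAM4 rate per lane


def rates(generation):
    """Return NIC port and full OSFP cage bandwidth for a generation."""
    lane = LANE_GBPS[generation]
    return {
        "nic_port_gbps": NIC_PORT_LANES * lane,
        "osfp_cage_gbps": OSFP_LANES * lane,
    }


for generation in LANE_GBPS:
    print(generation, rates(generation))
```

Doubling the lane rate doubles both figures without changing the form factor, which is why the move to XDR can reuse the OSFP cage.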

For many current AI training environments, NDR still provides sufficient bandwidth for:

Migration toward XDR will become increasingly important as GPU architectures continue to increase communication density and memory bandwidth.

For deeper insight into form factor decisions, see our QSFP-DD vs OSFP comparison guide which covers the broader trade-offs between competing 400G/800G architectures.

OSFP has become the foundation of NVIDIA InfiniBand NDR AI infrastructure. The combination of twin-port switch architecture, single-port NIC connectivity, and standardized MMA-series SKUs enables hyperscale GPU clusters to be deployed efficiently.

Key takeaways include:

Whether building a research cluster or a hyperscale GPU training environment, selecting the correct OSFP architecture directly impacts deployment efficiency, thermal reliability, and long-term scalability.

A: OSFP (Octal Small Form-factor Pluggable) is a high-speed optical transceiver form factor used in NVIDIA InfiniBand and Ethernet networking platforms. It supports high-bandwidth PAM4 signaling while providing improved thermal performance for dense AI and HPC environments.

A: IHS (Integrated Heat Sink) modules use finned-top cooling optimized for switch airflow, while RHS (Riding Heat Sink) modules use flat-top thermal designs intended for NIC environments with integrated cooling structures.

A: NVIDIA InfiniBand with OSFP is commonly used in AI clusters, high-performance computing environments, and modern data centers that need very fast, low-latency connections. It is well suited for workloads such as large-scale AI training, scientific simulation, and data-intensive applications, where fast communication between servers and accelerators is critical.

A: No. Quantum-2 uses a twin-port OSFP architecture in which one OSFP cage carries two independent 400G NDR InfiniBand links rather than a single native 800G Ethernet optical connection.

A: Yes. Many third-party vendors provide ConnectX-7 compatible OSFP modules that support CMIS interoperability and NVIDIA platform compatibility when properly validated.

A: Certain ConnectX-7 adapters support QSFP112 interfaces, allowing 400G NDR connectivity using the QSFP form factor. However, Quantum-2 switch platforms primarily use twin-port OSFP implementations.

A: OSFP-based NVIDIA InfiniBand matters because it helps deliver the fast, consistent data movement that low-latency, high-throughput workloads depend on. By supporting high-speed links in a compact form factor, OSFP enables InfiniBand hardware to connect servers, GPUs, and switches efficiently, which is especially valuable for AI training, HPC, and other performance-sensitive applications where delays and bandwidth limits can reduce overall system performance.

A: OSFP NVIDIA InfiniBand can be explained as a high-speed connection technology built for systems that need to move large amounts of data very quickly and reliably. OSFP refers to the physical module format, while InfiniBand is the networking technology behind the performance. In simple terms, it helps AI, HPC, and data center environments run faster by improving communication between servers, GPUs, and switches.